All the tools required are publicly available and easy to use, even for amateurs. Film scenes of all kinds can be manipulated and the actors' faces swapped. One Reddit user recreated the Leia scene from Rogue One this way, without a $200 million budget. The technology could have serious consequences.

Two months ago, a single Redditor caused a stir with his deceptively real porn fakes of Gal Gadot and other stars and starlets. Since then, an entire scene has emerged on Reddit. The subreddit r/deepfakes now has over 38 members, many of whom manipulate porn themselves by using an AI to add any face to an existing film. All they need is enough pictures of their unsuspecting target and a few publicly available tools. One Redditor has even built an app that lets beginners create AI-powered porn.

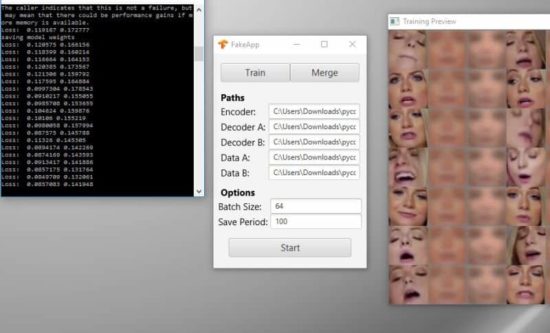

Redditor deepfakeapp developed the so-called FakeApp, an easy-to-use application that allows anyone to manipulate porn. "I think the current version of the app is a good starting point, but I hope to improve it even further in the coming days and months," he said. "I want users to be able to simply select a video from their computer, download a neural network that is fed a specific set of image data from a public archive, and then swap out the face in the video with the push of a button."

For example, Redditor UnobtrusiveBot placed Jessica Alba's face on porn actress Melanie Rios' body using FakeApp. "It was super fast - I'm just learning how to retrain the model. It took about five hours - not bad for the result," he wrote in a comment. Redditor nuttynutter6969 also used FakeApp to turn Star Wars actress Daisy Ridley into an involuntary porn star.

Most of the posts on r/deepfakes are pornographic in nature, but some users also create videos that show there are no limits to the technology as long as you have the right raw data. Redditor Z3ROCOOL22, for example, combined recordings of Hitler with the Argentine President Mauricio Macri. According to deepfakeapp, anyone who downloads FakeApp and has one or two high-quality videos of their target person can create realistic films.

All you need is a good graphics processor with CUDA support. Users who do not have the right processor can also use cloud-based services such as Google Cloud Platform. The entire process from data extraction to conversion to full face swap supposedly takes around eight to twelve hours if done correctly. Some users report that it took them much longer - sometimes with disastrous results.

Deepfakeapp points to a particularly successful example: a video by the user derpfake, who created a face swap of Princess Leia from Rogue One. "Above is the original from Rogue One with a strange computer-animated Carrie Fisher. Budget for the film: 200 million dollars," derpfake writes of his video. "Below is the 20-minute manipulation that could have been done with an actress who looks like Carrie Fisher. My budget: $0 and a couple of Fleetwood Mac albums." Instead of animating Princess Leia's face on the computer at great expense, derpfake fed the software real shots of the young Carrie Fisher and inserted them into the film scenes.

Technology that makes it so easy for anyone to fake deceptively real videos, and that is evolving so rapidly, could have serious implications. There are no limits to the imagination: whether it is revenge porn, manipulated recordings of stars or political speeches, any recording can be faked with the necessary raw data and a few open source tools. Soon the technology will be so good that it will be impossible for us to tell the difference between a real video and an AI-generated fake. Even today, people are finding it increasingly difficult to distinguish between the truth and fake news, and news of all kinds spreads over social media at lightning speed. What is new is that the technical means are now available to everyone. This undermines the whole principle of trust and credibility. We will probably have to get used to a world in which such video fakes keep getting better and therefore harder to recognize, along with the associated conflicts with the personal rights of the people concerned. In the future, such forgeries could be used as the basis of propaganda or intentionally misleading news.

"Dravens Tales from the Crypt" has been enchanting for over 15 years with a tasteless mixture of humor, serious journalism - for current events and unbalanced reporting in the press politics - and zombies, garnished with lots of art, entertainment and punk rock. Draven has turned his hobby into a popular brand that cannot be classified.